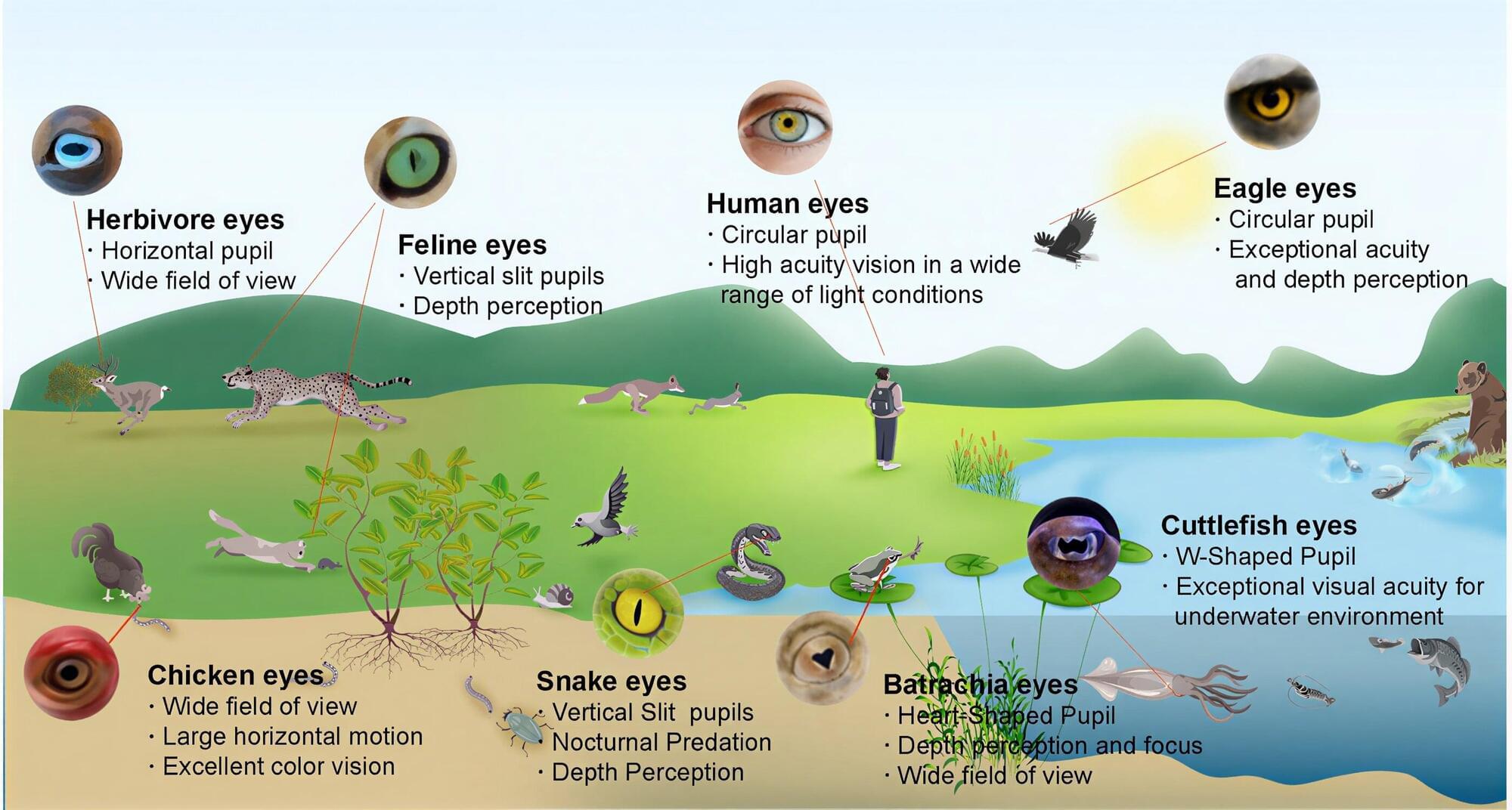

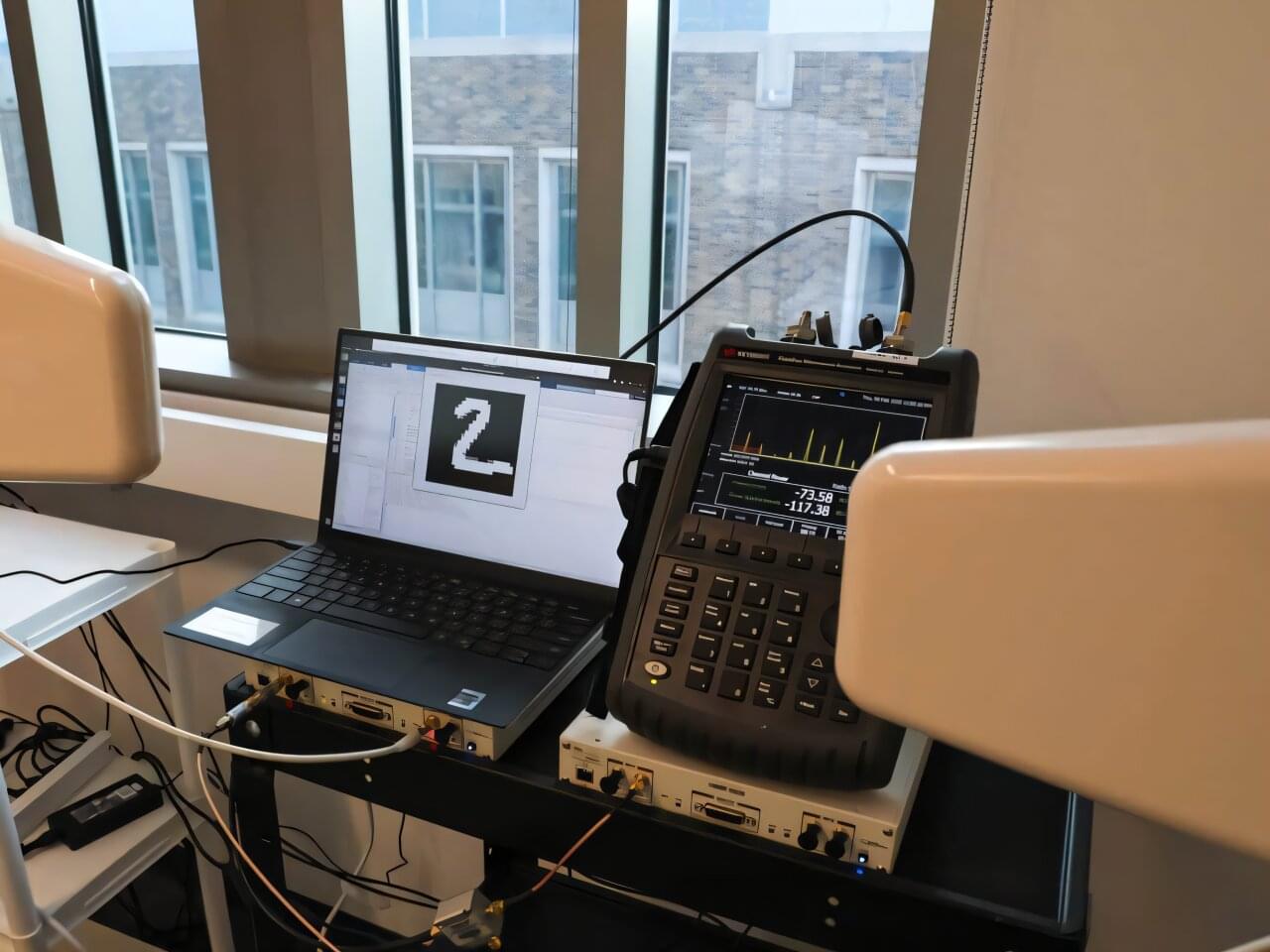

Robot vision could soon get a boost thanks to the development of a bioinspired eye that can automatically adjust its pupil size in response to changing light levels. Robots, self-driving cars and drones often struggle with dynamic lighting. When a car enters a dark tunnel, its camera aperture needs to stay wide open to capture enough light, just as our pupils dilate when the lights go out. But when the car exits into daylight, the camera can be instantly blinded by the glare.

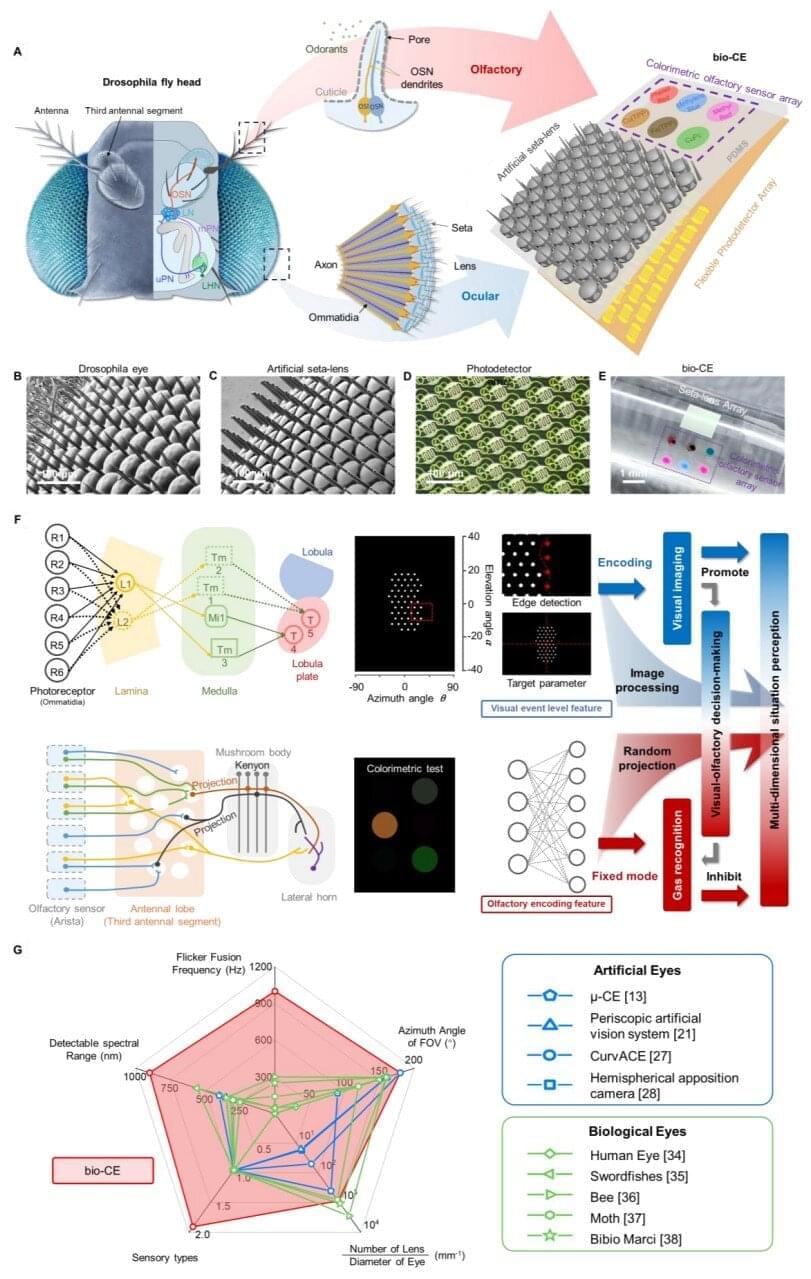

In a study published in the journal Science Robotics, researchers detail how they have created a bioinspired vision system that not only mimics the way eyes see but also adapts to light conditions. The technology is designed to bridge the gap between how a standard camera sees and how living creatures view their surroundings.

Cameras may excel at capturing high-resolution images, but in dynamic environments they lack the flexibility to adapt to changing light.