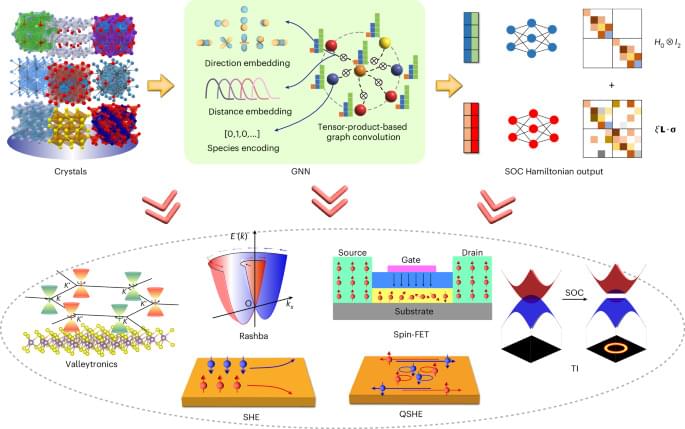

Zhong et al. introduce Uni-HamGNN, a graph neural network model that predicts spin–orbit-coupled electronic structures quickly and accurately, enabling fast screening and the discovery of advanced quantum materials across the periodic table.

My Patreon Page:

https://www.patreon.com/johnmichaelgodier.

My Event Horizon Channel:

Papers:

“Natural Selection Favors AIs over Humans”, Dan Hendrycks, 2023.

An exploration of what may be the coming age of alien communications, and how quantum computers and AI might alter the course of how it unfolds.

⚠️⚠️⚠️Please note: The narration in this documentary is produced using advanced AI voice technology and is not voiced by a human narrator.⚠️⚠️⚠️

Sir David Attenborough: Have We Finally Solved the Fermi Paradox?

The universe contains hundreds of billions of galaxies. Each galaxy holds hundreds of billions of stars. Around many of those stars orbit planets — some potentially similar to Earth.

So where is everybody?

In 1950, physicist Enrico Fermi posed a simple yet unsettling question: if intelligent life is common in the cosmos, why have we found no evidence of it? This contradiction became known as the Fermi Paradox — one of the greatest mysteries in modern science.

In this immersive documentary, we explore whether recent discoveries in astronomy, astrobiology, and cosmology may finally offer an answer. From the staggering scale of the Milky Way to the discovery of thousands of exoplanets by missions like the James Webb Space Telescope and the Kepler Space Telescope, our understanding of the universe has transformed dramatically in just a few decades.

We examine the leading explanations: the Rare Earth hypothesis, the Great Filter theory, cosmic distance barriers, self-destruction scenarios, and the possibility that advanced civilizations may exist beyond our ability to detect them.

A new, fully customizable 3D printed socket design is set to transform the prosthetics industry. The reimagined limb socket interface combines highly personalized pressure mapping with AI software and a lighter infill, creating a highly customized prosthetic that’s more comfortable to wear, for much longer, say researchers at Simon Fraser University.

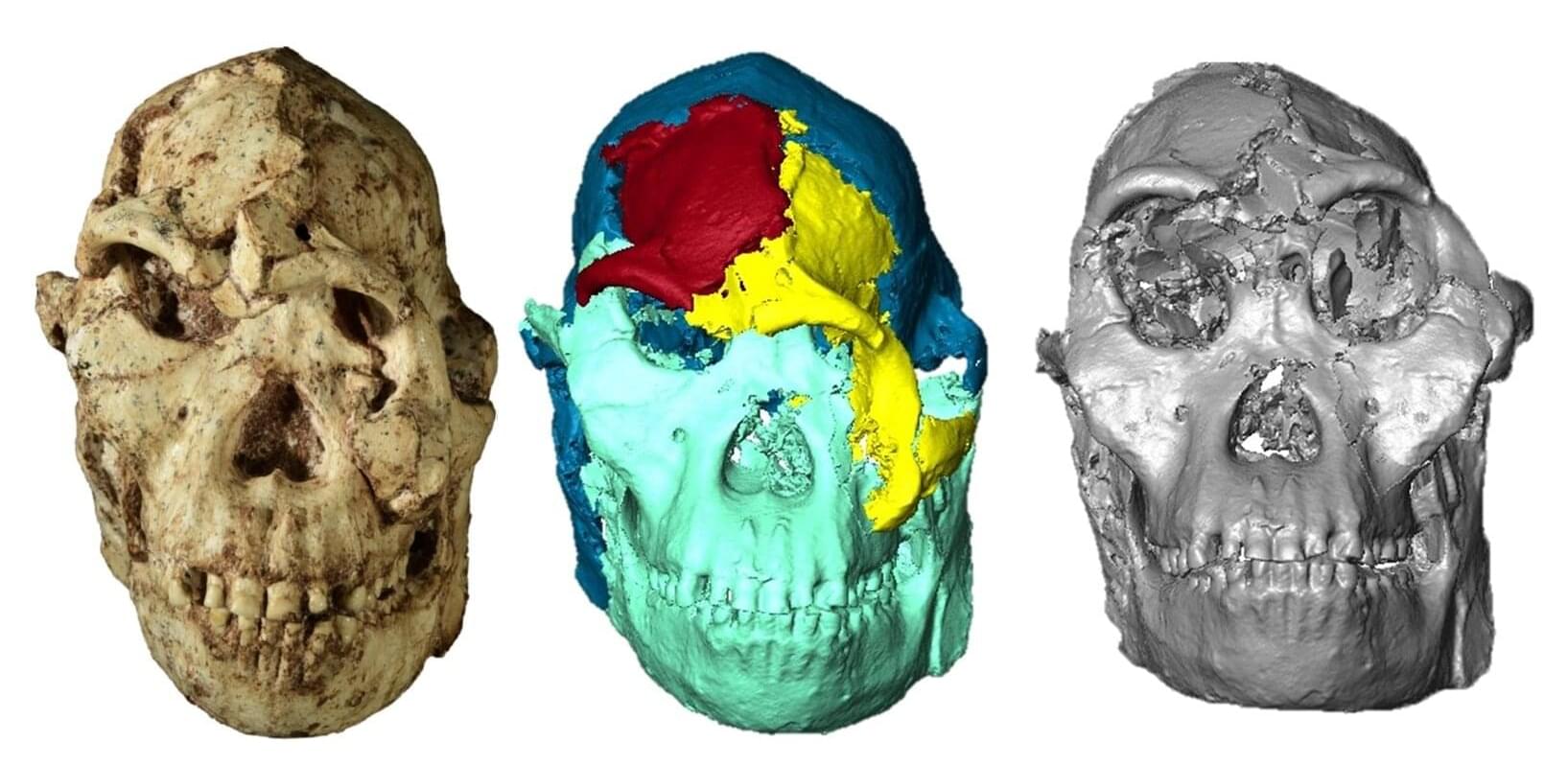

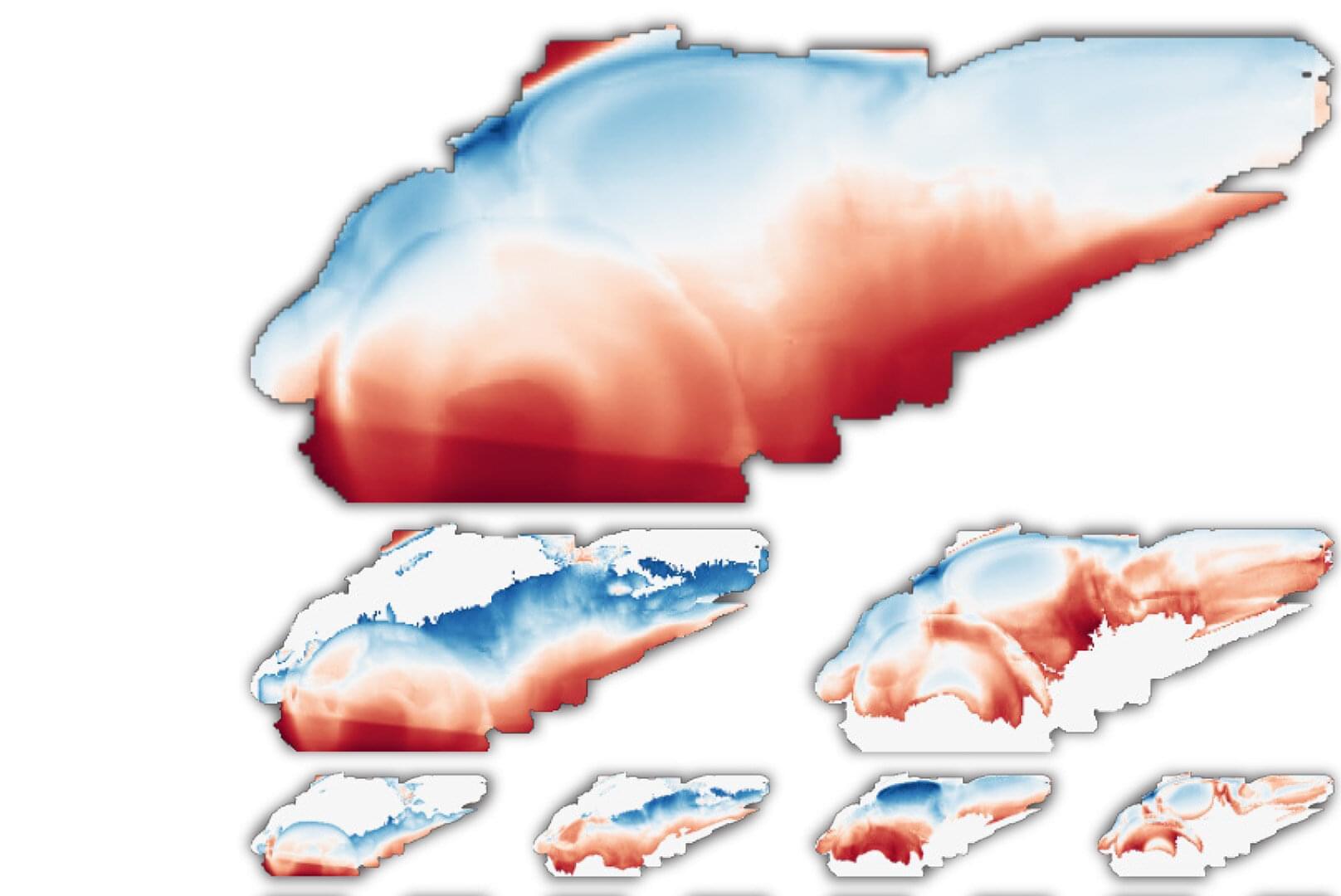

Identified as the most complete Australopithecus fossil discovered to date, “Little Foot” was buried in sediments whose movement and weight caused fractures and deformations, making analysis of its skull, and more particularly its face, difficult. This anatomical region, which is essential for understanding the adaptations of our ancestors and relatives to their environment, has now been virtually reconstructed for the first time by a CNRS researcher and her British and South African colleagues. The results are published in Comptes Rendus Palevol.

A comparative analysis of this reconstruction with several extant great apes and three other Australopithecus specimens reveals that the face of “Little Foot” is closer in terms of size and morphology to Australopithecus specimens from eastern Africa than to those from southern Africa. This finding raises questions about the relationships between these different populations and about the chronology of the evolutionary processes that reshaped the faces of these hominins, particularly the orbital region, which appears to have been subject to strong selective pressures.

The skull was first transported to the Diamond Light Source synchrotron (United Kingdom), where it was carefully digitized. The research team then virtually isolated the bone fragments using semi-automated methods and supercomputers. Their realignment resulted in a 3D reconstruction with a resolution of 21 microns. More than five years were required to complete this reconstruction.

Your brain begins as a single cell. When all is said and done, it will house an incredibly complex and powerful network of some 170 billion cells. How does it organize itself along the way? Cold Spring Harbor Laboratory neuroscientists have come up with a surprisingly simple answer that could have far-reaching implications for biology and artificial intelligence.

Stan Kerstjens, a postdoc in Professor Anthony Zador’s lab, frames the question in terms of positional information. “The only thing a cell ‘sees’ is itself and its neighbors,” he explains. “But its fate depends on where it sits. A cell in the wrong place becomes the wrong thing, and the brain doesn’t develop right. So, every cell must solve two questions: Where am I? And who do I need to become?”

In a study published in Neuron, Kerstjens, Zador, and colleagues at Harvard University and ETH Zürich put forward a new theory for how the brain organizes itself during development.

Artificial intelligence (AI) systems are computational models that can learn to identify patterns in data, make accurate predictions or generate content (e.g., texts, images, videos or sound recordings). These models can reliably complete various tasks and are now also used to carry out research rooted in different fields.

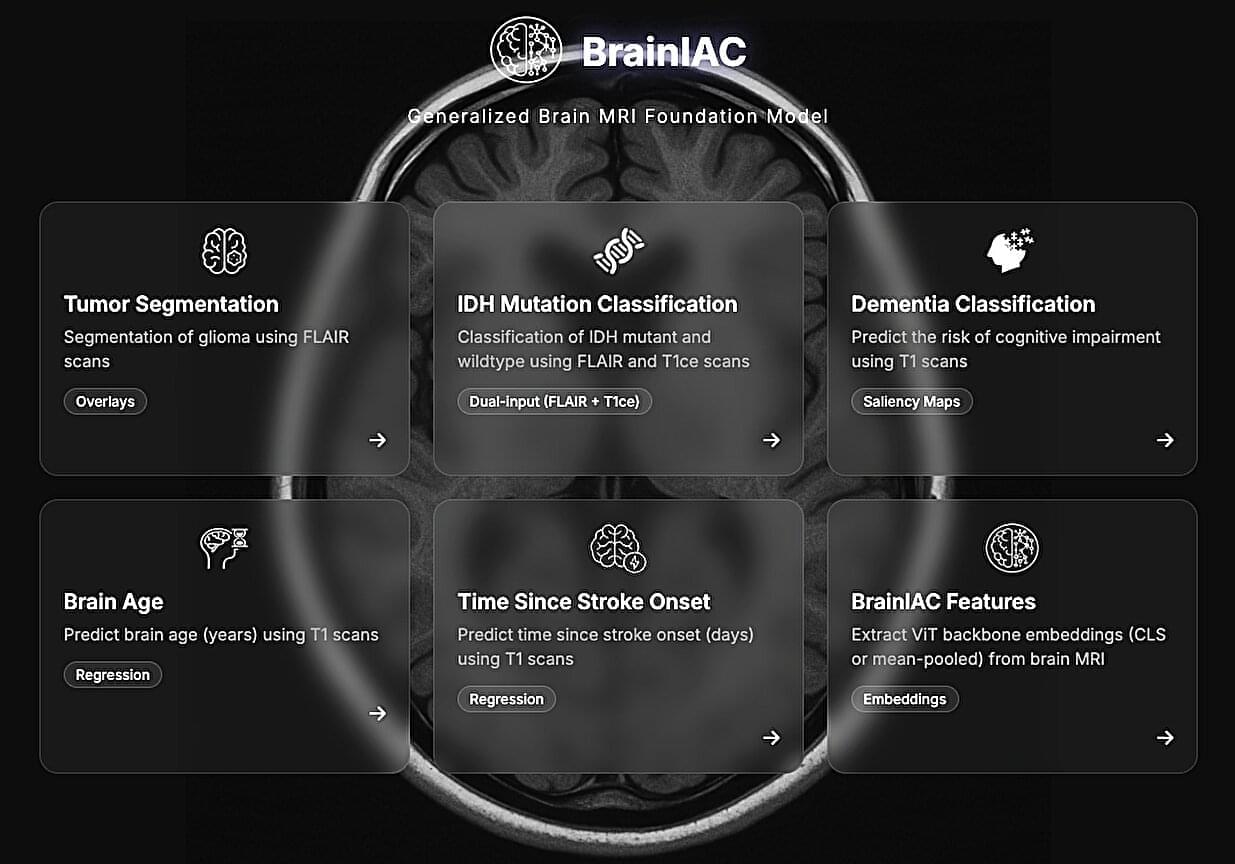

Over the past few decades, some AI models have proved promising for the early diagnosis and study of specific diseases or neuropsychiatric conditions. For instance, by analyzing large amounts of brain scans collected using a noninvasive technique known as magnetic resonance imaging (MRI), AI could uncover patterns associated with tumors, strokes and neurodegenerative diseases, which could help to diagnose these conditions.

Researchers at Mass General Brigham, Harvard Medical School and other institutes recently developed Brain Imaging Adaptive Core (BrainIAC), a large AI system pre-trained on a vast pool of MRI data that could be adapted to tackle different tasks. This foundation model, presented in a paper published in Nature Neuroscience, was found to outperform many models that were trained to complete specific medical or neuroscience-related tasks.